The largest cyber observatory in the world with a unique lens on adversaries and their preparations to attack.

Zero Day Live’s real-time, measurable cyber attack prevention takes your enterprise out of the victim pool.

What makes Zero Day Live the best investment in cyber security?

From Attackers’ Intentions to Proactive Prevention

Providing proprietary, holistic, high-confidence and high-precision intelligence data points on adversaries’ malicious intent. Orchestrating preventative data points into existing security infrastructure, at the pace of the adversary. Measurably supercharging the ROI from existing security investments.

Why Zero Day Live?

Unique intelligence obtained from our own sources and customized to your organization - delivering what matters most

Sharing intelligence throughout your existing security infrastructure - closing the gap and reducing exposure

Threat intelligence is automatically integrated in real time, before threats can do harm

Security teams are mobile and so is ZDL - a native mobile app experience enabling command, coordination and decision making on the go

Many vendors can provide access to information - fewer provide truly anticipatory content based on customized intelligence.

— A Gartner Research Report for Security Leaders on Zero Day Live

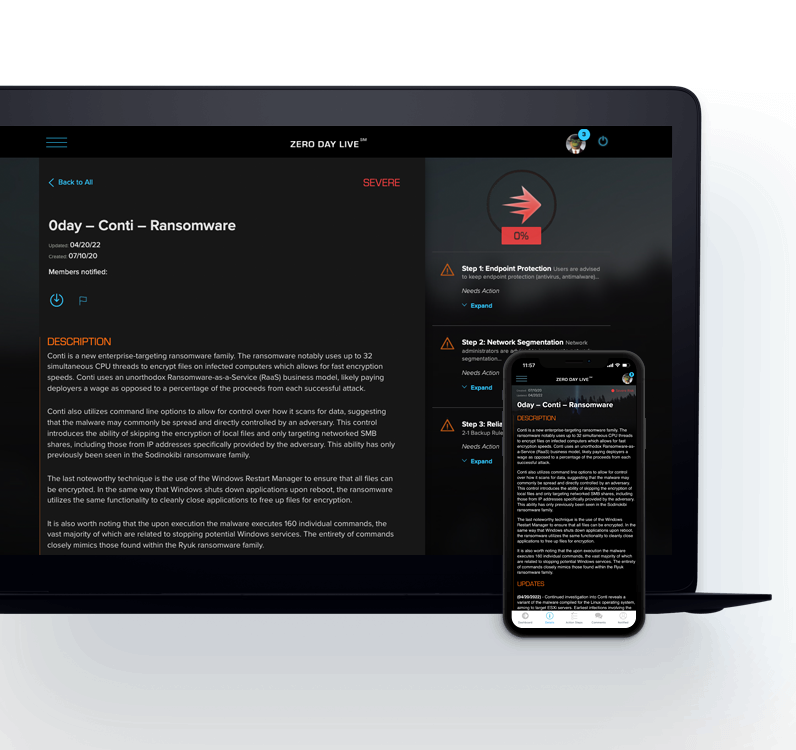

Relevant, Finished and Actionable Reports

Zero Day Live reports provide precise information on new and malformed zero days, with targeted, strategic and operational preventative actions, so that security teams and executives can make informed decisions that protect the enterprise. Six years of machine learning and cyber tradecraft are ‘finished’ by a cyber threat analyst so clients can take action on the day of detection.

Plain English language search

Zero Day Live machine learning algorithms make what is important, and intentionally obfuscated, in the dark web open, fully indexed and searchable. Providing unmatched coverage of what matters most in the dark web, refreshed daily. Unparalleled capability to drill down, optimized for sub-second response time. Zero Day Live does not consume, repackage or replay secondary intelligence.

Enterprise Cyber Risk Assessment

Enterprise Watch List and tuning develop resistance in your cyber security DNA using evidence-based optimization. The longer it is in use, the more effective it gets. Watch List is subject-directed monitoring. It never sleeps and provides a persistent digital witness of past and future action against any enterprise subject. Pivot and refocus this capability to review third parties, partners and acquisition targets to identify cyber risks.

Want to learn more about our platform?

Prevent the threat or deal with the attack. The choice is yours.

Contact Us